Neural networks are among the most powerful tools in modern artificial intelligence. These computational models, loosely inspired by the structure of the human brain, have changed how machines learn from and process information. Whether you're new to AI or looking to deepen your understanding, this guide walks through the fundamentals of neural networks.

What Are Neural Networks?

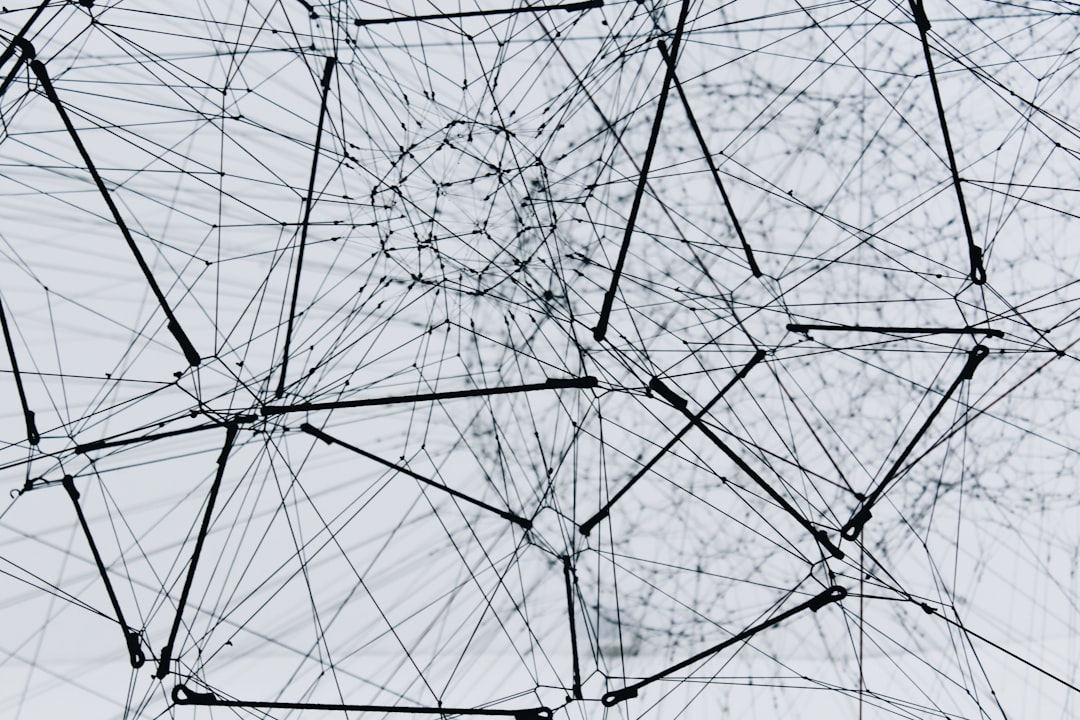

At their core, neural networks are computing systems designed to recognize patterns in data and solve complex problems. They consist of interconnected nodes, or neurons, organized in layers. Each connection between neurons carries a weight, a number that scales the signal passing along that connection. A neuron combines its weighted inputs into a single value and passes the result on to the next layer.
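To make the role of weights concrete, here is a minimal sketch of a single neuron in plain Python. The function name `neuron_output`, the bias term, and the sample numbers are illustrative assumptions rather than anything the text prescribes.

```python
def neuron_output(inputs, weights, bias=0.0):
    """Weighted sum of the inputs plus a bias (no activation applied yet)."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    return sum(x * w for x, w in zip(inputs, weights)) + bias


# Hypothetical example: three input signals and their connection weights.
signals = [1.0, 2.0, 3.0]
weights = [0.5, -1.0, 0.25]
result = neuron_output(signals, weights, bias=0.5)
print(result)  # 0.5 - 2.0 + 0.75 + 0.5 = -0.25
```

A larger weight (in absolute value) lets the corresponding input influence the result more, and a negative weight pushes the result in the opposite direction.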

The basic structure includes an input layer that receives data, one or more hidden layers that process information, and an output layer that produces results. This architecture allows neural networks to learn from examples and make predictions or decisions based on new data.

The Building Blocks: Layers and Neurons

Understanding how layers work is crucial to grasping neural networks. The input layer receives raw data, such as pixel values from an image or numerical features from a dataset. Each neuron in this layer corresponds to one feature of the input, for example a single pixel value or a single column of a dataset.

Hidden layers perform most of the computation. Each hidden layer applies a weighted sum followed by an activation function to the output of the previous layer, so later layers can build increasingly complex features out of simpler ones. A network might have one hidden layer or dozens, depending on the complexity of the task. Deep learning refers to networks with many hidden layers that can learn intricate patterns.

The output layer produces the final result. For classification tasks, it might indicate which category an input belongs to. For regression problems, it could provide a numerical prediction. The number of neurons in the output layer depends on the problem: typically one per category for classification, or a single neuron when predicting one number.
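The flow from the input layer through a hidden layer to the output layer can be sketched as follows. This is a minimal, dependency-free illustration: the helper names (`relu`, `dense`, `forward`), the 2-3-1 layer sizes, and every weight and bias value are made up for the example.

```python
def relu(values):
    """Apply ReLU element-wise: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        sum(x * w for x, w in zip(inputs, row)) + b
        for row, b in zip(weights, biases)
    ]


def forward(features):
    """Input (2 features) -> hidden layer (3 neurons) -> output (1 neuron)."""
    hidden_weights = [[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]]
    hidden_biases = [0.0, 0.0, -1.0]
    output_weights = [[1.0, 2.0, -1.0]]
    output_biases = [0.5]

    hidden = relu(dense(features, hidden_weights, hidden_biases))
    return dense(hidden, output_weights, output_biases)


prediction = forward([2.0, 1.0])
print(prediction)  # [4.5]
```

With the input `[2.0, 1.0]`, the hidden layer produces `[1.0, 1.5, 0.0]` after ReLU, and the single output neuron combines those into `4.5`. A real network would learn its weights from data instead of having them written by hand.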

Activation Functions: Adding Non-Linearity

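Activation functions play a vital role in neural networks by introducing non-linearity. Without them, every layer would be a linear transformation, and any stack of linear transformations is itself just one linear transformation. The network would gain nothing from its depth and could not model curved or otherwise non-linear relationships in the data.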
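A quick numerical check illustrates why linear layers alone are limiting. The sketch below stacks two linear layers with no activation in between and compares the result with a single layer whose weights are the product of the two. The 2x2 matrices and the input vector are arbitrary illustrative values, and biases are omitted for brevity.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    columns = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns] for row in a]


# Two "layers" with no activation function between them.
layer1 = [[1.0, 2.0], [0.0, -1.0]]
layer2 = [[3.0, 1.0], [-2.0, 4.0]]
x = [5.0, -2.0]

stacked = matvec(layer2, matvec(layer1, x))
# The same mapping expressed as one layer with weights layer2 * layer1.
combined = matvec(matmul(layer2, layer1), x)
print(stacked, combined)  # [5.0, 6.0] [5.0, 6.0]
```

Both routes give the same output, so the two-layer stack is no more expressive than one layer. Placing a non-linear function between the layers breaks this equivalence.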

Common activation functions include ReLU, which outputs the input if it is positive and zero otherwise; sigmoid, which squashes values into the range between zero and one; and tanh, which maps values into the range between negative one and one. Each function has specific use cases and affects how the network learns.
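The three functions are short enough to write out directly. The following is a sketch using only Python's standard `math` module; the two-branch form of `sigmoid` is an implementation choice made here to avoid overflow for large negative inputs.

```python
import math


def relu(x):
    """Return x if it is positive, otherwise zero."""
    return max(0.0, x)


def sigmoid(x):
    """Squash x into the range between 0 and 1."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, use an equivalent form that keeps exp() from overflowing.
    z = math.exp(x)
    return z / (1.0 + z)


def tanh(x):
    """Map x into the range between -1 and 1."""
    return math.tanh(x)


for value in (-2.0, 0.0, 2.0):
    print(value, relu(value), round(sigmoid(value), 4), round(tanh(value), 4))
```

Note that sigmoid is centered on 0.5 and tanh on 0, while ReLU leaves positive inputs unchanged.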

The Learning Process: Training Neural Networks

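Training a neural network involves adjusting weights to minimize the difference between predicted and actual outputs. Two pieces work together here: backpropagation computes how much each weight contributed to the error (the gradient of the loss with respect to that weight), and an optimization method like gradient descent uses those gradients to update the weights.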

During training, the network makes predictions on training data, calculates the error using a loss function, and then adjusts weights to reduce this error. This cycle repeats thousands or millions of times until the network achieves satisfactory performance.
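The predict, measure, adjust cycle can be sketched on the smallest possible model: a single weight fitted to toy data. The data, the starting weight, the learning rate of 0.05, and the step count are all assumptions chosen for illustration. With only one weight, the gradient is a single derivative written out by hand; in a multi-layer network, backpropagation is what computes these derivatives for every weight.

```python
# Toy data generated from y = 2 * x, so the ideal weight is 2.0.
inputs = [1.0, 2.0, 3.0, 4.0]
targets = [2.0, 4.0, 6.0, 8.0]


def mean_squared_error(weight):
    """Loss function: average squared gap between predictions and targets."""
    return sum((weight * x - y) ** 2 for x, y in zip(inputs, targets)) / len(inputs)


def gradient(weight):
    """Derivative of the mean squared error with respect to the weight."""
    return sum(2 * (weight * x - y) * x for x, y in zip(inputs, targets)) / len(inputs)


weight = 0.0          # arbitrary starting value
learning_rate = 0.05  # assumed step size for this toy problem
initial_loss = mean_squared_error(weight)

for step in range(100):
    # Move the weight a small step against the gradient to reduce the loss.
    weight -= learning_rate * gradient(weight)

final_loss = mean_squared_error(weight)
print(f"weight={weight:.4f}, loss={final_loss:.6f}")
```

The weight moves from 0.0 toward 2.0 and the loss shrinks accordingly. Real training follows the same pattern with many more weights and usually processes the data in small batches.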

The learning rate determines how much to adjust weights in each iteration. Too high, and the updates can overshoot the minimum of the loss, or even grow larger with every step so that training diverges. Too low, and training becomes unnecessarily slow. Finding the right balance is crucial for effective training.
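The effect of the learning rate is easy to see on a one-dimensional problem. This sketch minimizes f(w) = w² (whose gradient is 2w) with three different learning rates. The function, the starting point, and the specific rates are illustrative assumptions; suitable values for a real network depend on the model and data.

```python
def run_gradient_descent(learning_rate, steps=50, start=1.0):
    """Minimize f(w) = w**2 (gradient 2*w) and return the final w."""
    w = start
    for _ in range(steps):
        w -= learning_rate * 2 * w
    return w


too_high = run_gradient_descent(1.1)    # each step overshoots and the error grows
too_low = run_gradient_descent(0.001)   # barely moves in 50 steps
reasonable = run_gradient_descent(0.1)  # steadily approaches the minimum at 0
print(too_high, too_low, reasonable)
```

With a rate of 1.1 the value of `w` ends up far from zero, with 0.001 it is still close to its starting point of 1.0, and with 0.1 it lands very near the minimum.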

Practical Applications

Neural networks power numerous applications in today's world. Image recognition systems can identify objects, faces, and scenes with remarkable accuracy. Natural language processing models understand and generate human language. Recommendation systems suggest products or content based on user preferences.

In autonomous vehicles, neural networks process sensor data to make driving decisions. Medical diagnosis systems analyze images to detect diseases. Financial institutions use them for fraud detection and risk assessment. The applications continue to expand as technology advances.

Common Challenges and Solutions

Working with neural networks comes with challenges. Overfitting occurs when a network fits its training data so closely, noise included, that it fails to generalize to new data. Regularization techniques such as weight decay and dropout help prevent this. Vanishing gradients, where the error signal shrinks as it is propagated back through many layers, can stall learning in deep networks; activation functions such as ReLU and normalization techniques such as batch normalization mitigate this issue.
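As one example of these techniques, here is a minimal sketch of dropout in its common "inverted" form: during training, each activation is zeroed with some probability and the survivors are scaled up so the expected total stays the same; at inference time the layer passes values through unchanged. The function signature, the `rng` parameter, and the sample values are assumptions made for this illustration, not the API of any particular framework.

```python
import random


def dropout(activations, drop_probability, training=True, rng=random):
    """Inverted dropout: zero random activations and rescale the survivors."""
    if not 0.0 <= drop_probability < 1.0:
        raise ValueError("drop_probability must be in [0, 1)")
    if not training or drop_probability == 0.0:
        # At inference time the layer does nothing.
        return list(activations)
    keep_probability = 1.0 - drop_probability
    return [
        a / keep_probability if rng.random() < keep_probability else 0.0
        for a in activations
    ]


rng = random.Random(0)  # fixed seed so repeated runs drop the same units
hidden = [1.0, 2.0, 3.0, 4.0]
print(dropout(hidden, 0.5, training=True, rng=rng))
print(dropout(hidden, 0.5, training=False))
```

Because a different random subset of neurons is silenced on each training step, the network cannot rely too heavily on any single neuron, which tends to reduce overfitting.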

Computational resources pose another challenge. Training complex networks requires significant processing power, often necessitating GPUs or specialized hardware. Cloud computing platforms have made these resources more accessible to learners and developers.

Getting Started with Your First Network

Beginning your journey with neural networks doesn't require advanced mathematics or expensive equipment. Start with simple problems and gradually increase complexity. Popular frameworks like TensorFlow and PyTorch provide tools to build and train networks without implementing everything from scratch.

Practice with well-known datasets like MNIST for digit recognition or CIFAR-10 for image classification. These benchmarks help you understand concepts while providing immediate feedback on your progress. Online courses and tutorials offer structured learning paths with hands-on exercises.

The Future of Neural Networks

Neural networks continue to evolve rapidly. Researchers develop new architectures that solve previously intractable problems. Transformers have reshaped natural language processing. Graph neural networks handle complex relational data. Generative models create realistic images, text, and audio.

As hardware improves and algorithms become more efficient, neural networks will tackle increasingly sophisticated challenges. Understanding their fundamentals positions you to participate in this exciting field and contribute to its advancement.

Whether you're pursuing a career in AI or simply curious about how machines learn, neural networks offer endless opportunities for exploration and innovation. The journey from understanding basic concepts to building sophisticated models is challenging but rewarding. Start with the fundamentals, practice consistently, and watch your skills grow as you unlock the potential of artificial intelligence.